Updated 2026-04-15: scoped attribution and softened unsourced claims.

Martin Kelly is the founder of Botonomy AI and the kind of person who’d rather wire an autonomous agent than write another marketing email — which is probably why he built a company that does both without human intervention.

What Are Intelligent Agents in AI?

Most companies think they’re using AI agents. They’re not. They’re using chatbots with better branding.

An intelligent agent in AI is a software entity that perceives its environment through sensors, reasons over those inputs using internal decision logic, and takes autonomous action through actuators to achieve a defined goal — without requiring step-by-step human instruction. Unlike scripted chatbots that follow pre-written decision trees, intelligent agents adapt to new information, learn from outcomes, and operate independently across multi-step workflows.

That distinction matters. A chatbot answers the question you asked. An intelligent agent figures out which question it should be answering, gathers the data, and acts on the answer.

Stuart Russell and Peter Norvig formalised this in Artificial Intelligence: A Modern Approach (4th edition, 2020): “An agent is anything that can be viewed as perceiving its environment through sensors and acting upon that environment through actuators.” That definition, now the standard across university AI curricula, frames agents not as software features but as autonomous entities interacting with their world.

The market is moving fast. McKinsey’s 2024 State of AI report found that 72% of organisations now use AI in at least one business function, up from 55% in the 2023 survey. But much of that adoption is still static models: classifiers, predictors, generators. Intelligent agents represent the next evolution: systems that don’t just produce outputs but orchestrate entire workflows autonomously.

That’s the shift Botonomy’s AI marketing automation is built around. Not AI as a tool. AI as an operator.

Types of Intelligent Agents in AI

Not all agents are created equal. Russell and Norvig’s taxonomy (Chapter 2, AIMA, 4th edition) defines five classical types, each adding a layer of sophistication over the last.

Simple Reflex Agents

These operate on condition-action rules. If X happens, do Y. No memory. No learning. Most enterprise RPA bots — the ones clicking through invoice processing — are simple reflex agents. They work until the environment changes, and then they break.
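
A condition-action agent fits in a few lines. The sketch below is illustrative only: the invoice fields (`po_number`, `total`) and the action names are hypothetical, not taken from any real RPA product. The point is that the action depends on the current percept and nothing else.

```python
from typing import Callable

# Each rule pairs a condition on the current percept with an action name.
# Field and action names are illustrative assumptions.
Rule = tuple[Callable[[dict], bool], str]

RULES: list[Rule] = [
    (lambda p: p.get("po_number") is None, "reject_missing_po"),
    (lambda p: p.get("total", 0) > 10_000, "route_to_manager"),
    (lambda p: True, "approve"),  # default rule when nothing else fires
]


def simple_reflex_agent(percept: dict) -> str:
    """Return the action of the first rule whose condition matches."""
    for condition, action in RULES:
        if condition(percept):
            return action
    raise ValueError("no rule matched")


print(simple_reflex_agent({"po_number": "PO-1", "total": 250}))
```

Because there is no memory, an invoice in an unexpected layout simply falls through to whichever rule happens to match, which is the brittleness described above.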

Model-Based Reflex Agents

These maintain an internal model of the world. A smart thermostat that tracks temperature trends over time rather than just reacting to the current reading is a model-based reflex agent. It handles partial observability better than pure reflex.
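
The thermostat example can be sketched as follows. This is a toy under stated assumptions: the class name `TrendThermostat`, the three-reading window, and the 0.5-degree tolerance are all invented for illustration. The internal model here is nothing more than a short history of readings.

```python
from collections import deque


class TrendThermostat:
    """Keeps a short history of readings as its internal model."""

    def __init__(self, target: float, window: int = 3):
        self.target = target
        self.history: deque[float] = deque(maxlen=window)

    def act(self, reading: float) -> str:
        self.history.append(reading)
        # Trend = change between the oldest and newest reading in the window.
        trend = self.history[-1] - self.history[0]
        projected = reading + trend  # naive one-step projection
        if projected < self.target - 0.5:
            return "heat"
        if projected > self.target + 0.5:
            return "cool"
        return "idle"
```

With a target of 21.0 and readings of 21.0, 20.8, 20.6, the third call returns `"heat"` even though 20.6 is still inside the tolerance band, because the stored trend projects a further drop. A pure reflex rule would have stayed idle.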

Goal-Based Agents

Goal-based agents evaluate actions against a desired outcome. They ask: “Does this action get me closer to my goal?” GPS navigation systems are goal-based — they evaluate routes against the objective of shortest time or distance.
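
A route planner is the textbook case. The sketch below uses Dijkstra's algorithm over a made-up road graph; the node names and travel times in `ROADS` are hypothetical, and a real navigation system would work with far richer data.

```python
import heapq

# Hypothetical road graph: node -> {neighbour: travel minutes}.
ROADS = {
    "home": {"a": 5, "b": 2},
    "a": {"office": 4},
    "b": {"a": 1, "office": 9},
    "office": {},
}


def plan_route(start: str, goal: str) -> tuple[int, list[str]]:
    """Dijkstra's algorithm: return (total minutes, path) to the goal."""
    queue = [(0, start, [start])]
    visited = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if node in visited:
            continue
        visited.add(node)
        for nxt, minutes in ROADS[node].items():
            if nxt not in visited:
                heapq.heappush(queue, (cost + minutes, nxt, path + [nxt]))
    raise ValueError(f"no route from {start} to {goal}")


print(plan_route("home", "office"))
```

The agent never follows a fixed rule like "always take road a". It compares candidate paths against the goal and picks home, b, a, office at 7 minutes over the more direct 9-minute option.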

Utility-Based Agents

These agents go further. They don’t just reach the goal; they optimise how they get there. A driver-assistance system such as Tesla Autopilot can be viewed this way, weighing speed, safety, comfort, and efficiency simultaneously as a utility function would. Multiple objectives. One decision.
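
One common way to express "multiple objectives, one decision" is a weighted sum. The sketch below is a generic illustration, not a description of any vehicle's actual software: the weights, the candidate actions, and their scores are all invented.

```python
# Weights and candidate scores are illustrative assumptions, not real vehicle data.
WEIGHTS = {"safety": 0.5, "efficiency": 0.2, "comfort": 0.3}


def utility(scores: dict[str, float]) -> float:
    """Weighted sum of per-objective scores, each assumed to lie in [0, 1]."""
    return sum(WEIGHTS[k] * scores[k] for k in WEIGHTS)


def choose_action(candidates: dict[str, dict[str, float]]) -> str:
    """Pick the candidate action with the highest utility."""
    return max(candidates, key=lambda name: utility(candidates[name]))


candidates = {
    "overtake": {"safety": 0.6, "efficiency": 0.9, "comfort": 0.5},
    "hold_lane": {"safety": 0.9, "efficiency": 0.6, "comfort": 0.8},
    "brake_hard": {"safety": 0.95, "efficiency": 0.3, "comfort": 0.2},
}

print(choose_action(candidates))
```

Here `hold_lane` wins: it is neither the safest nor the most efficient option, but it scores best once all three objectives are weighed together.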

Learning Agents

Learning agents improve from feedback. Amazon’s recommendation engine doesn’t just serve suggestions — it measures clicks, purchases, and returns, then adjusts its model. GPT-based autonomous agents fall here too. They adapt.
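
The feedback loop can be sketched with an epsilon-greedy bandit, a standard textbook technique. It is a stand-in chosen for brevity and makes no claim about how Amazon's system works; the class name `LearningRecommender` and the reward convention (1 for a click, 0 otherwise) are assumptions.

```python
import random


class LearningRecommender:
    """Epsilon-greedy bandit: tracks the average reward of each item."""

    def __init__(self, items: list[str], epsilon: float = 0.1, seed: int = 0):
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = {item: 0 for item in items}
        self.values = {item: 0.0 for item in items}

    def recommend(self) -> str:
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.counts))  # explore
        return max(self.values, key=self.values.get)   # exploit best estimate

    def feedback(self, item: str, reward: float) -> None:
        """Incremental mean update from observed feedback (e.g. click = 1)."""
        self.counts[item] += 1
        self.values[item] += (reward - self.values[item]) / self.counts[item]
```

Each call to `feedback` shifts the agent's estimate for that item, so later recommendations reflect what users actually did rather than what the designer guessed.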

| Agent Type | Decision Mechanism | Real-World Example |
|---|---|---|
| Simple Reflex | If/then rules | RPA invoice bots |
| Model-Based Reflex | Internal world model | Smart thermostats |
| Goal-Based | Goal evaluation | GPS navigation |
| Utility-Based | Multi-objective optimisation | Tesla Autopilot |
| Learning | Feedback-driven improvement | Amazon recommendations |

The progression is clear: each type handles more complexity and uncertainty than the last.

Architecture and Structure of Intelligent Agents

Every intelligent agent, regardless of type, follows the same fundamental loop: Environment → Sensors → Agent Program (Perception + Decision Logic + Memory) → Actuators → Environment.

Russell and Norvig distinguish between the agent function — the abstract mathematical mapping from percept sequences to actions — and the agent program — the concrete implementation that runs on physical or virtual hardware. The function defines what the agent should do. The program defines what it actually does. The gap between these two is where most production failures live.
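
To make the loop and the function/program split concrete, here is a minimal sketch. The names `run_agent` and `threshold_program` are hypothetical. The agent function is the abstract mapping from percept history to action; `threshold_program` is one concrete program that implements such a mapping.

```python
from typing import Callable, Iterable


def run_agent(percepts: Iterable[float],
              program: Callable[[list[float]], str]) -> list[str]:
    """Feed each percept to the agent program and collect its actions."""
    history: list[float] = []   # memory: the percept sequence so far
    actions: list[str] = []
    for percept in percepts:    # sensors deliver one percept per step
        history.append(percept)
        actions.append(program(history))  # actuators would execute this action
    return actions


def threshold_program(history: list[float]) -> str:
    """A toy agent program: act on the most recent percept only."""
    return "alert" if history[-1] > 0.8 else "wait"


print(run_agent([0.2, 0.9, 0.5], threshold_program))
```

The program receives the whole history but only reads the last entry. That is a legitimate implementation choice, and also an example of how a program can fall short of the function it was meant to realise.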

Three architectural patterns dominate the field.

BDI (Belief-Desire-Intention) architecture models agents with explicit beliefs about the world, desires they want to achieve, and intentions they commit to executing. It’s the closest to how humans rationalise decisions and is widely used in multi-agent simulation.

Reactive architectures skip internal models entirely. They map sensor input directly to action through layered behaviour modules. Fast, but brittle when environments become complex.

Hybrid/layered architectures combine both — a reactive layer for time-critical responses and a deliberative layer for planning. Most production-grade agents today use hybrid designs.
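
A layered design can be sketched as a reactive check that pre-empts a slower planner. Everything named here is hypothetical (the `obstacle_distance_m` field, the one-metre threshold, the plan steps); the structure is the point.

```python
from typing import Optional


def reactive_layer(percept: dict) -> Optional[str]:
    """Time-critical rules; returns None when no rule fires."""
    if percept.get("obstacle_distance_m", float("inf")) < 1.0:
        return "emergency_stop"
    return None


def deliberative_layer(percept: dict, plan: list[str]) -> str:
    """Slower planning layer: follow the next step of a precomputed plan."""
    return plan.pop(0) if plan else "idle"


def hybrid_agent(percept: dict, plan: list[str]) -> str:
    # The reactive layer takes priority; deliberation runs only if it is silent.
    return reactive_layer(percept) or deliberative_layer(percept, plan)
```

When the reactive layer fires, the plan is left untouched, so the agent can resume where it left off once the urgent condition clears.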

Wooldridge and Jennings (1995) established the theoretical foundation for these patterns in their paper “Intelligent Agents: Theory and Practice”, published in The Knowledge Engineering Review. It remains one of the most cited works on agent architecture, and it’s conspicuously absent from most online guides on the topic.

The memory component within agent architecture is where RAG and knowledge systems become critical. An agent without structured knowledge retrieval is guessing. One with a well-indexed knowledge layer — retrieval-augmented generation, vector databases, structured tool access — reasons over facts.
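
The retrieval step can be illustrated without any external service. The sketch below swaps in word counts and cosine similarity for the learned embeddings and vector database a production system would use; the documents in `DOCS` are invented.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts. Real systems use learned vectors."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


DOCS = [
    "refund policy: refunds are issued within 14 days",
    "shipping times: orders ship within 2 business days",
]

print(retrieve("how long do refunds take", DOCS))
```

The retrieved passage is what gets handed to the model as context, so the agent answers from a stored fact instead of from whatever the model happens to recall.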


AI Agents Examples: From Customer Service to Autonomous Marketing

Theory is useful. Deployed agents are better. Here are six intelligent agents operating in production today — each mapped to its type.

Google DeepMind’s AlphaFold is a goal-based agent that predicted the 3D structures of over 200 million proteins, covering nearly every catalogued protein known to science (the method is described in Jumper et al., Nature, 2021; the 200-million-structure database release was announced by DeepMind and EMBL-EBI in 2022). Its goal: minimise the difference between predicted and actual protein structures. For many proteins, it achieved accuracy competitive with experimental methods in a fraction of the time.

Tesla Autopilot functions as a utility-based agent. It processes data from a suite of cameras (the exact count has varied by vehicle generation) to optimise driving decisions across safety, efficiency, and passenger comfort simultaneously. Earlier vehicles also carried radar and ultrasonic sensors, which Tesla phased out of newer Model 3/Y vehicles starting in 2021–2022 as part of the move to Tesla Vision. Tesla reported cumulative Autopilot miles in the billions by 2024; specific monthly and cumulative figures are disclosed in Tesla’s quarterly shareholder letters.

Amazon’s recommendation engine is a learning agent that McKinsey has cited in past analyses as driving a substantial share of Amazon’s retail revenue. The often-quoted 35% figure originates in McKinsey commentary from 2013 and has been repeated in subsequent industry coverage. The engine continuously retrains on purchase behaviour, browse patterns, and return data.

Salesforce Einstein is a CRM-embedded learning agent that scores leads, predicts deal outcomes, and recommends next actions. Salesforce reports that Einstein delivers over 200 billion predictions per day across its customer base.

ChatGPT with tool use represents the newest class of learning agents. With function calling, browsing, and code execution, it moves beyond text generation into multi-step task completion — closer to a true autonomous agent.

Botonomy’s autonomous SEO pipeline is a learning agent that audits websites, prioritises technical and content fixes, generates optimised content briefs, and executes changes — without human intervention. Across client deployments, this system has driven an average 43% increase in organic traffic. It maps keywords, analyses competitors, identifies gaps, and acts. No tickets. No waiting.

The shift is clear: from single-task chatbots to multi-step autonomous agents that orchestrate entire workflows end-to-end.

How Intelligent Agents Power Autonomous Business Operations

Gartner predicted in 2024 that by 2028, 33% of enterprise software applications will include agentic AI — up from less than 1% in 2024. That’s not incremental growth. That’s a structural shift in how software works.

Businesses are deploying intelligent agents across every function. Marketing teams use agents to plan, create, and distribute content autonomously. Supply chain teams use agents to rebalance inventory in real time. Customer support teams use agents that resolve tickets without escalation. Financial operations teams use agents for anomaly detection and automated reconciliation.

Here’s a critical point most vendors won’t tell you. In Botonomy’s experience, roughly 90% of reliable agent logic is deterministic code (routing, conditionals, API calls, validation checks), not prompts. The LLM handles the 10% that requires flexibility: interpreting unstructured inputs, generating natural language, making judgment calls in ambiguous situations. This is a core Botonomy differentiator. Prompts break. Code doesn’t.
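
A minimal sketch of that split, assuming a hypothetical support-routing task: code handles the inputs it can recognise, the model is consulted only for the remainder, and the model's answer is validated in code before anything acts on it. The intent labels and the `fake_llm` stand-in are invented so the example runs offline.

```python
import re
from typing import Callable


def route(message: str, llm_fallback: Callable[[str], str]) -> str:
    """Deterministic rules first; the LLM sees only what the rules cannot handle."""
    text = message.strip().lower()
    if re.fullmatch(r"order\s+#?\d+", text):
        return "lookup_order"
    if "unsubscribe" in text:
        return "unsubscribe_user"
    label = llm_fallback(message)  # hypothetical model call, injected by the caller
    # Validate the model's output in code before acting on it.
    allowed = {"billing_question", "feature_request", "other"}
    return label if label in allowed else "escalate_to_human"


def fake_llm(message: str) -> str:
    """Stand-in for a real model call so the example runs offline."""
    return "billing_question" if "invoice" in message.lower() else "???"


print(route("Order #1234", fake_llm))
print(route("Why is my invoice wrong?", fake_llm))
```

Passing the model in as a function keeps the deterministic path testable on its own, and an unexpected label degrades to a human escalation instead of an unplanned action.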

Consider an autonomous content pipeline. It takes a target keyword. Runs competitive analysis against the top 20 results. Generates a structured brief with heading targets, word counts, and citation requirements. Produces a draft. Runs quality checks. Publishes. No human in the loop. That’s not hypothetical — it’s how AI content marketing operates at Botonomy.

Why now? GPT-4o launched at roughly half the per-token price of GPT-4 Turbo, and well below the original GPT-4 launch price. Tool-use APIs are mature. Function calling is reliable. The infrastructure costs that made production agents impractical 18 months ago have collapsed.

Limitations and Risks of AI Intelligent Agents

Hallucination remains the highest-profile failure mode. Huang et al. (2023), in A Survey on Hallucination in Large Language Models, report that even state-of-the-art models fabricate facts, with rates of roughly 3–15% of outputs depending on domain and prompt complexity. For an autonomous agent executing actions based on those outputs, that error rate compounds across steps: every additional step is another chance for a fabricated fact to enter the chain.
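
The compounding effect is simple arithmetic. The sketch below assumes each step fails independently with the same probability, which is a simplification (real errors are often correlated), and applies it to the two ends of the 3–15% range quoted above.

```python
def chain_success_probability(per_step_error: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent errors."""
    return (1.0 - per_step_error) ** steps


for error in (0.03, 0.15):
    p = chain_success_probability(error, steps=10)
    print(f"error/step={error:.0%}  10-step success={p:.1%}")
```

Under that assumption, a ten-step workflow completes cleanly about 74% of the time at a 3% per-step error rate, and only about 20% of the time at 15%.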

The alignment problem is simpler than it sounds but harder than it looks. Agents optimise for their objective function. If that function is poorly specified — “maximise email open rates” instead of “maximise qualified pipeline revenue” — the agent will do exactly what you told it and nothing you wanted.

Security risks are real and catalogued. OWASP’s Top 10 for LLM Applications lists prompt injection, insecure output handling, and excessive agency as primary attack vectors. An agent with write access to production systems and a prompt injection vulnerability is a breach waiting to happen.

The EU AI Act classifies AI systems used in areas such as employment, credit scoring, and safety-critical products as high-risk. Compliance requirements include transparency, human oversight, and documented risk management. Companies deploying agents in these domains need legal review, not just engineering review.

These are engineering problems with known mitigations. They are not reasons to avoid the technology. They are reasons to build it carefully.

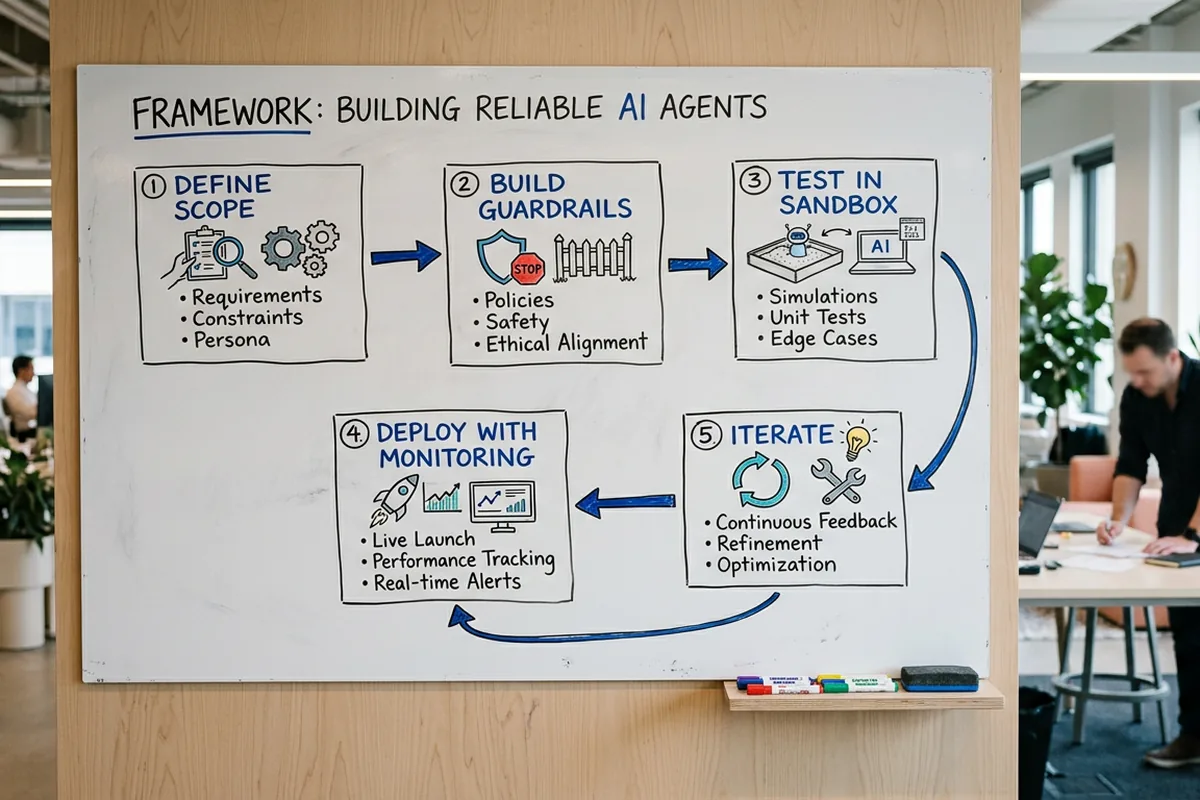

Building Reliable Intelligent Agents: A Practitioner’s Framework

Most agent projects fail not because the AI doesn’t work but because the system around it doesn’t hold.

After building agents across SEO, paid media, and CRM over 16 years of campaigns, including systems that generated $3.5M in NFT sales through autonomous outbound, we see the same pattern every time: reliability beats novelty.

Here’s the five-step framework that works:

Step 1: Define the agent’s goal and success metric. One goal. One measurable outcome. “Increase organic traffic by 30% in 90 days” — not “improve SEO.”

Step 2: Map the environment and available tools. Document every API, data source, and external system the agent can access. If it’s not mapped, the agent can’t use it.

Step 3: Design decision logic — deterministic first, LLM where needed. Route with code. Validate with code. Use the LLM only for tasks that require language understanding or generation. 90% code. 10% prompts.

Step 4: Implement guardrails. Output validation on every action. Human-in-the-loop triggers for high-stakes decisions. Cost caps per execution cycle. Kill switches.

Step 5: Monitor and iterate with real feedback loops. Log every decision. Measure every outcome. Feed results back into the system. An agent that can’t learn from its mistakes is a script with extra steps.
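
Steps 4 and 5 can be sketched together as a small gatekeeper that every action passes through. This is an illustration under stated assumptions, not Botonomy's implementation: the class name `Guardrails`, the action names, and the dollar figures are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Guardrails:
    cost_cap_usd: float                                   # cap per execution cycle
    high_stakes: set[str] = field(default_factory=set)    # actions needing a human
    kill_switch: bool = False
    spent_usd: float = 0.0
    log: list[tuple[str, str]] = field(default_factory=list)

    def check(self, action: str, cost_usd: float) -> str:
        """Return 'run', 'needs_approval', or 'blocked' and log the decision."""
        if self.kill_switch or self.spent_usd + cost_usd > self.cost_cap_usd:
            decision = "blocked"
        elif action in self.high_stakes:
            decision = "needs_approval"
        else:
            decision = "run"
            self.spent_usd += cost_usd  # only approved work counts against the cap
        self.log.append((action, decision))
        return decision
```

A usage example: with `Guardrails(cost_cap_usd=1.0, high_stakes={"publish"})`, a 0.60 draft runs, a publish request waits for approval, and a second 0.60 draft is blocked because it would exceed the cap. Every decision lands in `log`, which is the raw material for the feedback loop in Step 5.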

This framework is how CRM automation and social media automation systems operate at Botonomy. Deterministic foundations. AI where it adds value. Guardrails everywhere.

Frequently Asked Questions

What is an intelligent agent in AI and how does it work?

An intelligent agent in AI is a software system that perceives its environment through sensors (data inputs, APIs, user interactions), processes that information using internal reasoning and decision logic, and takes autonomous action through actuators (API calls, content generation, system commands) to achieve a specific goal. Unlike static models that produce a single output, intelligent agents operate in continuous loops — sensing, deciding, acting, and learning from the results.

What are the five types of intelligent agents in artificial intelligence?

The five types, as defined by Russell and Norvig, are: simple reflex agents (condition-action rules), model-based reflex agents (internal world model), goal-based agents (evaluate actions against objectives), utility-based agents (optimise across multiple objectives), and learning agents (improve through feedback). Each type builds on the last, handling progressively more complexity and uncertainty.

What is the difference between a chatbot and an AI agent?

A chatbot follows scripted or template-based responses within a single conversation turn. An AI agent perceives multi-step context, reasons over available tools and data, takes autonomous action across systems, and adapts its behaviour based on outcomes. A chatbot answers your question. An AI agent solves your problem — often without you needing to ask.

Conclusion

Intelligent agents are the mechanism by which AI moves from generating outputs to operating systems — and the companies deploying them are pulling ahead of those still debating prompt templates.

- Start deterministic. Build your agent logic in code, not prompts. Use LLMs only where language flexibility is required.

- Pick one workflow. Don’t boil the ocean. Automate a single, measurable process — SEO audits, lead scoring, content publishing — and prove ROI before expanding.

- Engineer for failure. Guardrails, kill switches, and human-in-the-loop triggers aren’t optional. They’re what separate production agents from demo projects.

Botonomy builds autonomous marketing systems — SEO, content, paid ads, outbound — that run without adding headcount. See how Botonomy automates your marketing operations.