Updated 2026-04-15: corrected CrewAI built-in tracing claim (CrewAI has native tracing and observability).

Martin Kelly is the founder of Botonomy AI and the kind of builder who’d rather read a GitHub diff than a press release — especially when it involves agent orchestration frameworks that actually ship.

What Is the OpenAI Agents SDK and Why Does It Matter?

Most teams still treat LLMs like glorified autocomplete. One prompt in, one response out. That pattern breaks the moment you need multi-step reasoning, tool use, or coordination between specialized models.

The OpenAI Agents SDK is a lightweight, open-source Python framework from OpenAI for building agentic AI applications that combine tool use, agent-to-agent handoffs, guardrails, and built-in tracing. It succeeds OpenAI’s earlier experimental Swarm framework with a production-oriented design and is released under the MIT license. The full source lives on GitHub at openai/openai-agents-python.

OpenAI released this SDK because the industry is shifting from single-call LLM interactions to multi-step agent orchestration. Their official announcement framed it explicitly: agents are the next interface layer. Not chatbots. Not copilots. Autonomous systems that plan, execute, and self-correct.

This matters for the broader ecosystem because it signals OpenAI’s commitment to owning the orchestration layer — not just the model layer. The SDK is minimal by design. No sprawling abstraction hierarchy. No magic. Just primitives you compose into workflows.

From a practitioner’s standpoint, the real value is control. You decide which agents exist and which ones each can hand off to, exactly which tools each agent can call, and when execution must halt. This is how Botonomy AI approaches marketing automation with agent systems: code-driven pipelines where the LLM handles language tasks and everything else stays predictable.

The hype cycle around AI agents is loud. The SDK is quiet. That’s why it’s worth understanding deeply.

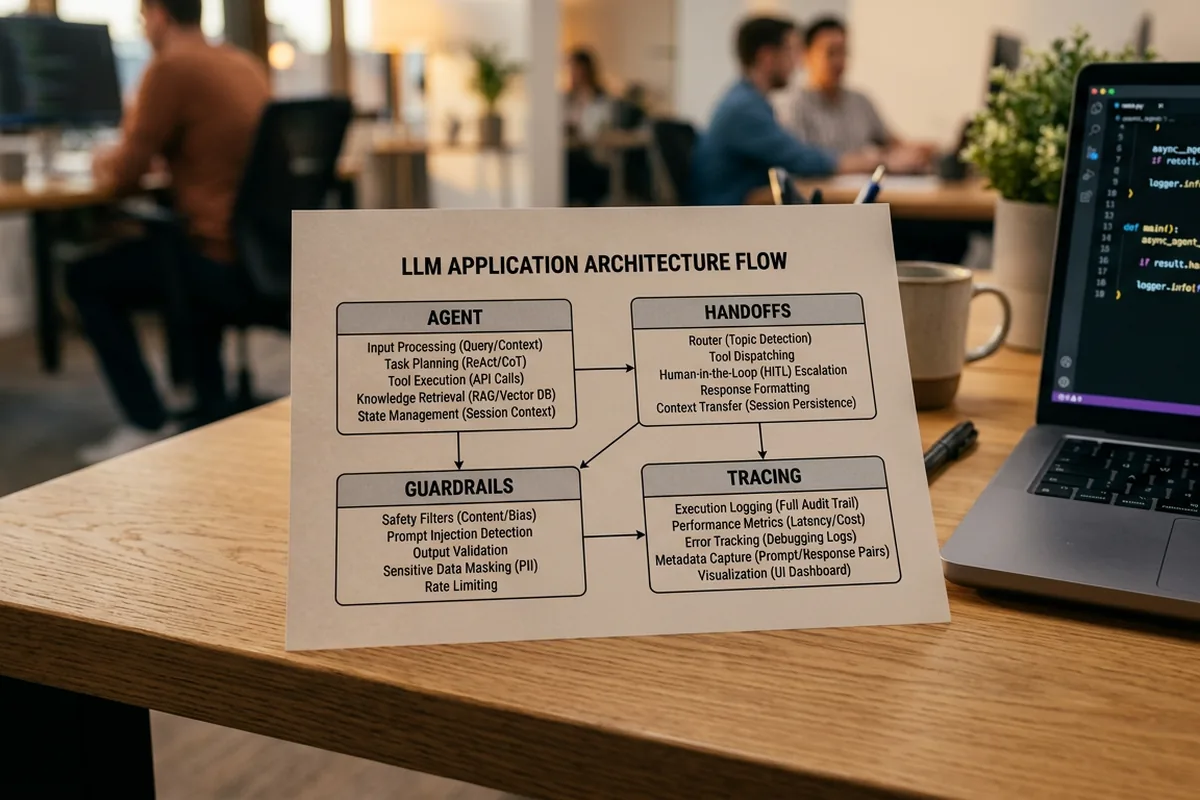

Core Architecture: Agents, Handoffs, Guardrails, and Tracing

Four primitives. That’s it. The entire OpenAI Agents SDK architecture rests on four building blocks, and understanding them clears up most of the confusion around how the framework operates.

Agent

An Agent is a configuration object that, at its core, combines three things: a set of natural-language instructions, a model identifier (e.g., `gpt-4o`), and a list of available tools. Think of it as a specialized worker with a job description and a toolbox. Agents don’t retain memory between runs: each `Runner.run()` call starts fresh unless you explicitly carry context forward, for example by passing `result.to_input_list()` into the next run or by attaching a session.

Handoff

A Handoff enables one agent to delegate control to another. This is how multi-agent workflows function. A triage agent evaluates user intent, then hands off to a billing agent, a technical support agent, or a content specialist. Handoffs are typed and explicit — no ambiguous routing.

Guardrail

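Guardrails check what goes into and comes out of an agent run: input guardrails inspect the user’s input, and output guardrails inspect the final response. Input guardrails run in parallel with the agent. Each guardrail function returns a `GuardrailFunctionOutput`; if its `tripwire_triggered` flag is set, the SDK raises an exception and halts execution entirely. A guardrail can also leave the tripwire off and simply record what it found in `output_info`, which makes it a validation check rather than a hard stop. This is not optional safety theater. It’s the mechanism that prevents a production agent from executing on malformed or adversarial input.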
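To make the tripwire behavior concrete, here is a minimal, SDK-free sketch in plain `asyncio`. It models a guardrail that runs concurrently with a slow agent and cancels the agent’s in-flight work when its tripwire fires. Everything here is hypothetical (`fake_agent`, `banned_word_guardrail`, `TripwireTriggered`); it illustrates the pattern, not the SDK’s internals.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class GuardrailResult:
    # Same idea as a guardrail output: extra info plus a tripwire flag.
    output_info: dict
    tripwire_triggered: bool


class TripwireTriggered(Exception):
    """Hypothetical stand-in for the exception raised when a tripwire fires."""


async def fake_agent(user_input: str) -> str:
    # Stand-in for a slow model call.
    await asyncio.sleep(0.2)
    return f"Answer to: {user_input}"


async def banned_word_guardrail(user_input: str) -> GuardrailResult:
    # A cheap check that finishes well before the "model" does.
    await asyncio.sleep(0.01)
    hit = "drop table" in user_input.lower()
    return GuardrailResult(output_info={"banned_phrase": hit}, tripwire_triggered=hit)


async def run_with_guardrail(user_input: str) -> str:
    # Start the agent first so the guardrail runs alongside it, not before it.
    agent_task = asyncio.create_task(fake_agent(user_input))
    check = await banned_word_guardrail(user_input)
    if check.tripwire_triggered:
        agent_task.cancel()  # halt the agent's in-flight work
        try:
            await agent_task
        except asyncio.CancelledError:
            pass
        raise TripwireTriggered(check.output_info)
    return await agent_task


if __name__ == "__main__":
    print(asyncio.run(run_with_guardrail("What is the capital of France?")))
    try:
        asyncio.run(run_with_guardrail("please DROP TABLE users"))
    except TripwireTriggered as exc:
        print(f"Halted: {exc}")
```

Running the guardrail concurrently means a clean input pays almost no extra latency, while a bad input stops the agent before its answer is ever used.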

Tracing

Built-in observability ships with the SDK. Every agent run produces a trace: a structured record of each step, including LLM generations, tool calls, handoffs, and guardrail checks. By default, traces go to the Traces dashboard in the OpenAI platform, and you can register custom trace processors (for example with `add_trace_processor()`) to forward them to third-party observability tools.
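A trace is essentially a tree of timed spans. The sketch below is a toy model in plain Python, not the SDK’s tracing API: `ToyTrace` and its span fields are hypothetical, and the token counts are made-up values. It shows the kind of structured record a custom trace processor would receive, and why per-span token counts make cost monitoring straightforward.

```python
import itertools
import time
from contextlib import contextmanager


class ToyTrace:
    """Records nested, timed spans as flat dicts (a hypothetical model of a trace)."""

    def __init__(self, workflow: str):
        self.workflow = workflow
        self.spans = []  # finished spans, in completion order
        self._stack = []  # spans that are currently open
        self._ids = itertools.count(1)

    @contextmanager
    def span(self, kind: str, **data):
        parent = self._stack[-1]["id"] if self._stack else None
        record = {"id": next(self._ids), "parent": parent, "kind": kind, **data}
        self._stack.append(record)
        start = time.perf_counter()
        try:
            yield record
        finally:
            record["duration_ms"] = (time.perf_counter() - start) * 1000
            self._stack.pop()
            self.spans.append(record)

    def total_tokens(self) -> int:
        """Sum token counts across generation spans, e.g. for cost monitoring."""
        return sum(
            s.get("input_tokens", 0) + s.get("output_tokens", 0)
            for s in self.spans
            if s["kind"] == "generation"
        )


if __name__ == "__main__":
    t = ToyTrace("Customer support")
    with t.span("agent", name="Triage Agent"):
        with t.span("generation", input_tokens=120, output_tokens=15):
            pass  # the model call would happen here
        with t.span("handoff", to="Billing Specialist"):
            pass
    for s in t.spans:
        print(s["id"], s["parent"], s["kind"])
    print("tokens:", t.total_tokens())
```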

The Runner loop ties everything together. When you call `Runner.run()` (or its synchronous counterpart, `Runner.run_sync()`), the SDK enters a loop that repeatedly calls the active agent’s model, executes any tool calls, switches agents on a handoff, and runs guardrails, until an agent produces a final output or the run exceeds `max_turns`, in which case it raises `MaxTurnsExceeded`. This loop is the heart of the SDK’s execution model.
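A toy model of that loop helps when reasoning about `max_turns` and handoffs. The sketch below is not the SDK’s implementation: the “model” is a scripted Python function, guardrail checks are omitted, and `ToyAgent`, `run_loop`, and `counter_model` are hypothetical. Its local `MaxTurnsExceeded` only mirrors the name of the exception the SDK raises when the turn limit is hit.

```python
from dataclasses import dataclass, field
from typing import Callable


class MaxTurnsExceeded(Exception):
    """Local stand-in named after the SDK's exception for the same situation."""


@dataclass
class ToyAgent:
    name: str
    # The "model": reads the history and returns one action tuple:
    # ("final", text) | ("tool", tool_name, arg) | ("handoff", agent_name)
    model: Callable[[list], tuple]
    tools: dict = field(default_factory=dict)
    handoffs: dict = field(default_factory=dict)


def run_loop(agent: ToyAgent, user_input: str, max_turns: int = 10) -> tuple:
    """Toy run loop: one model call per turn, then a tool call, handoff, or final output."""
    history = [("user", user_input)]
    for _ in range(max_turns):
        action = agent.model(history)
        if action[0] == "final":
            return agent.name, action[1]
        if action[0] == "tool":
            _, tool_name, arg = action
            history.append(("tool", tool_name, agent.tools[tool_name](arg)))
        elif action[0] == "handoff":
            # Only declared targets are reachable; the new agent sees the same history.
            agent = agent.handoffs[action[1]]
            history.append(("handoff", agent.name))
        else:
            raise ValueError(f"unknown action: {action!r}")
    raise MaxTurnsExceeded(f"no final output within {max_turns} turns")


def word_count(text: str) -> int:
    return len(text.split())


def counter_model(history: list) -> tuple:
    # Call the tool once, then answer from its result.
    results = [h for h in history if h[0] == "tool"]
    if not results:
        return ("tool", "word_count", history[0][1])
    return ("final", f"{results[-1][2]} words")


counter = ToyAgent("Counter", counter_model, tools={"word_count": word_count})
triage = ToyAgent("Triage", lambda history: ("handoff", "Counter"), handoffs={"Counter": counter})

if __name__ == "__main__":
    print(run_loop(triage, "the quick brown fox"))  # ('Counter', '4 words')
```

A model that never returns a final action, such as `ToyAgent("Looper", lambda h: ("tool", "word_count", "x"), tools={"word_count": word_count})`, exhausts `max_turns` and raises `MaxTurnsExceeded`. That is exactly the failure mode a turn cap exists to bound.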

The tool system deserves specific attention. The SDK supports three main kinds of tools: function tools (Python functions decorated with `@function_tool`), hosted tools that run on OpenAI’s servers, such as `code_interpreter` and `file_search` (exposed as `CodeInterpreterTool` and `FileSearchTool`), and tools served by MCP servers that implement the Model Context Protocol. The `file_search` hosted tool searches OpenAI vector stores, so agents can retrieve and reason over uploaded documents without custom retrieval code.
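`@function_tool` builds a tool’s name, description, and parameter schema from the function’s signature and docstring. The sketch below shows that idea using only the standard library (`inspect`, `typing.get_type_hints`). It is not the SDK’s implementation, which handles far more cases; `tool_schema` and `count_words` are hypothetical names, and the type mapping covers scalar types only.

```python
import inspect
import json
from typing import get_type_hints

# Minimal mapping from Python annotations to JSON Schema types (scalars only).
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def tool_schema(func) -> dict:
    """Build a function-calling style schema from a function's signature and docstring."""
    hints = get_type_hints(func)
    properties = {}
    required = []
    for name, param in inspect.signature(func).parameters.items():
        properties[name] = {"type": _JSON_TYPES[hints[name]]}
        # Parameters without defaults must be supplied by the model.
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "parameters": {"type": "object", "properties": properties, "required": required},
    }


def count_words(text: str, min_length: int = 1) -> int:
    """Count words in text that have at least min_length characters."""
    return sum(1 for word in text.split() if len(word) >= min_length)


if __name__ == "__main__":
    print(json.dumps(tool_schema(count_words), indent=2))
```

The practical takeaway: type hints and docstrings are not decoration on a function tool. They are what the model reads when deciding how to call it.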

Shyamal Anadkat from OpenAI’s DevRel team has noted on the project’s GitHub discussions that the SDK’s design philosophy prioritizes “being close to the metal” — minimal wrappers, maximum transparency. That philosophy shows in the codebase.

OpenAI Agents SDK Python: Hands-On Code Walkthrough

Code that doesn’t run is documentation debt. Every example below runs after `pip install openai-agents`, with a valid OpenAI API key set in the `OPENAI_API_KEY` environment variable.

Minimal Agent with a Tool

```python
import asyncio

from agents import Agent, Runner, function_tool


@function_tool
def get_word_count(text: str) -> int:
    """Returns the word count of a given text."""
    return len(text.split())


agent = Agent(
    name="Writing Assistant",
    instructions="You help users analyze their text. Use tools when appropriate.",
    tools=[get_word_count],
)

result = asyncio.run(
    Runner.run(agent, input="How many words are in: 'The quick brown fox jumps over the lazy dog'")
)
print(result.final_output)
```

This example defines a function tool, attaches it to an agent, and runs a single query. The `Runner.run()` call handles the full execution loop, and `result.final_output` holds the agent’s final answer.

Multi-Agent Handoff

```python
import asyncio

from agents import Agent, Runner

billing_agent = Agent(
    name="Billing Specialist",
    # handoff_description tells the triage agent when this specialist fits.
    handoff_description="Handles invoices, charges, and payments.",
    instructions="You handle billing inquiries. Provide clear, specific answers about invoices and payments.",
)

technical_agent = Agent(
    name="Technical Support",
    handoff_description="Resolves technical problems with the product.",
    instructions="You resolve technical issues. Ask clarifying questions before suggesting fixes.",
)

triage_agent = Agent(
    name="Triage Agent",
    instructions="Determine if the user needs billing help or technical support, then hand off.",
    handoffs=[billing_agent, technical_agent],
)

result = asyncio.run(Runner.run(triage_agent, input="I got charged twice on my last invoice"))
print(result.last_agent.name)  # which specialist produced the answer
print(result.final_output)
```

The triage agent reads user intent and delegates. No routing logic in your code — the LLM decides based on instructions. But the handoff targets are explicit and bounded.

Input Guardrail

```python
import asyncio

from agents import (
    Agent,
    GuardrailFunctionOutput,
    InputGuardrail,
    InputGuardrailTripwireTriggered,
    Runner,
)


async def check_for_pii(ctx, agent, input_data):
    # Input may be a plain string or a list of message items; flatten to text.
    text = input_data if isinstance(input_data, str) else str(input_data)
    contains_email = "@" in text  # deliberately naive email check
    return GuardrailFunctionOutput(
        output_info={"contains_email": contains_email},
        tripwire_triggered=contains_email,
    )


guarded_agent = Agent(
    name="Safe Agent",
    instructions="You answer general knowledge questions.",
    input_guardrails=[InputGuardrail(guardrail_function=check_for_pii)],
)

try:
    result = asyncio.run(Runner.run(guarded_agent, input="My email is test@example.com"))
    print(result.final_output)
except InputGuardrailTripwireTriggered as e:
    print(f"Guardrail triggered: {e}")
```

The guardrail receives the same input as the agent. When its tripwire fires, the SDK raises `InputGuardrailTripwireTriggered` and halts execution instead of returning a result. This is how you build safety into the pipeline layer, not the prompt layer.
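The `"@" in text` check in the guardrail example is deliberately naive: it trips on any at-sign, such as “meet me @ noon.” A slightly better heuristic matches email-shaped substrings with a regular expression. The sketch below is plain Python; `find_emails` and `pii_tripwire` are hypothetical helpers whose return values you could pass into `GuardrailFunctionOutput` inside `check_for_pii`. It is still a heuristic, not real PII detection.

```python
import re

# Rough email shape: local part, "@", domain containing at least one dot.
# A heuristic, not a complete RFC 5322 validator.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def find_emails(text: str) -> list:
    """Return every email-like substring in text."""
    return EMAIL_RE.findall(text)


def pii_tripwire(text: str) -> dict:
    """Decide whether to trip, shaped like output_info plus tripwire_triggered."""
    emails = find_emails(text)
    return {"output_info": {"emails_found": len(emails)}, "tripwire_triggered": bool(emails)}


if __name__ == "__main__":
    print(pii_tripwire("My email is test@example.com"))
    print(pii_tripwire("Meet me @ noon"))
```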

How the Agents SDK Compares to LangChain, CrewAI, and Anthropic’s Approach

Choosing an agent framework isn’t a features checklist — it’s a bet on abstraction philosophy.

LangChain/LangGraph offers the most comprehensive ecosystem. According to LangChain’s documentation, it provides hundreds of integrations, extensive middleware, and LangGraph for stateful multi-agent orchestration. The trade-off: steeper learning curve, heavier abstractions, and more surface area for bugs. Debugging a LangChain chain often means reading framework internals.

CrewAI takes a role-based approach to multi-agent design. Per CrewAI’s docs, agents are assigned roles, goals, and backstories — more opinionated and structured. It’s effective for teams that want prescriptive patterns, less so for teams that need granular control.

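Anthropic’s main contribution here is its published agent design patterns (prompt chaining, routing, parallelization, orchestrator-workers, evaluator-optimizer), which are architectural guidance rather than a framework. Anthropic also ships the Claude Agent SDK, built on the agent harness behind Claude Code, but this comparison covers the pattern guidance, which delegates orchestration to the developer.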

The SDK’s GitHub repo describes it as a “lightweight, powerful framework for multi-agent workflows.” That’s accurate. It’s the thinnest abstraction of the four approaches.

| Feature | OpenAI Agents SDK | LangChain/LangGraph | CrewAI | Anthropic |
|---|---|---|---|---|
| Abstraction level | Low | High | Medium | N/A (patterns only) |
| Multi-agent support | Handoffs | LangGraph graphs | Role-based crews | DIY |
| Built-in tracing | Yes | Via LangSmith | Yes (built-in) | No |
| Model flexibility | OpenAI default, configurable | Multi-provider | Multi-provider | Claude only |
| Learning curve | Low | High | Medium | Varies |

The honest trade-off: the OpenAI SDK defaults to OpenAI models. The `model_provider` configuration allows non-OpenAI models, but the SDK is optimized for OpenAI’s API shape. If model portability is critical, LangChain offers more flexibility today.

Production Patterns: Deploying Agents That Don’t Break at Scale

Shipping a demo agent takes an afternoon. Shipping a production agent that handles 10,000 daily runs without hallucinating, timing out, or draining your API budget takes engineering discipline.

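Set `max_turns` explicitly. Pass a `max_turns` value to every `Runner.run()` call. The SDK applies a default limit (10 turns in current releases) and raises `MaxTurnsExceeded` when a run exceeds it, but an implicit default says nothing about your intended workflow depth, and a misbehaving agent will use every turn it is allowed. Default to the lowest number that covers your expected workflow depth, typically 5–10 for most use cases.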

Track token usage through tracing. The SDK’s built-in tracing records every LLM call, including token counts. Pipe these traces into your cost monitoring system. A single runaway agent on GPT-4o can run up tens of dollars in API costs surprisingly fast if tool calls cascade, because each turn typically resends the growing conversation history as input.
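The reason costs climb so quickly is arithmetic: if each turn adds roughly the same number of tokens to the history, and every turn resends the whole history, cumulative input tokens grow quadratically with the number of turns. The sketch below makes that concrete. All numbers are assumptions for illustration (2,000 tokens of instructions and tool schemas, 3,000 tokens added per turn, 300 output tokens per turn, placeholder prices per million tokens), not measured SDK behavior; substitute your own model’s current rates.

```python
def cumulative_input_tokens(turns: int, base_tokens: int, growth_per_turn: int) -> int:
    """Total input tokens when turn k (0-indexed) resends base_tokens + k * growth_per_turn."""
    return sum(base_tokens + k * growth_per_turn for k in range(turns))


def run_cost_usd(turns: int, base_tokens: int, growth_per_turn: int,
                 output_per_turn: int, usd_per_m_in: float, usd_per_m_out: float) -> float:
    """Estimated cost of one run: quadratic input growth plus linear output."""
    input_tokens = cumulative_input_tokens(turns, base_tokens, growth_per_turn)
    output_tokens = turns * output_per_turn
    return input_tokens / 1_000_000 * usd_per_m_in + output_tokens / 1_000_000 * usd_per_m_out


if __name__ == "__main__":
    # Placeholder prices (USD per million tokens); use your model's current rates.
    PRICE_IN, PRICE_OUT = 2.50, 10.00
    for turns in (10, 50):
        tokens = cumulative_input_tokens(turns, base_tokens=2_000, growth_per_turn=3_000)
        cost = run_cost_usd(turns, 2_000, 3_000, 300, PRICE_IN, PRICE_OUT)
        print(f"{turns} turns: {tokens:,} input tokens, about ${cost:.2f}")
```

Under these assumptions, going from 10 to 50 turns takes cumulative input from 155,000 to 3,775,000 tokens, roughly 24 times more, even though the turn count grew only five-fold. Multiply that by parallel runs and a low `max_turns` becomes a budget control, not just a safety net.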

Use Temporal for durable execution. The Temporal engineering team published a detailed integration guide showing how to wrap Agents SDK runs in Temporal workflows. This gives you retry logic, timeout handling, and execution persistence for free. It’s the strongest third-party endorsement of the SDK’s production readiness I’ve seen.
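Temporal’s real value is durability. If you are not ready to adopt it, a minimal in-process approximation of just the retry and timeout parts looks like this. It is plain `asyncio`, not Temporal’s API, and it persists nothing: if the process dies, the run is lost. `run_with_retries` is a hypothetical helper; `make_attempt` should return a fresh coroutine each time, such as `lambda: Runner.run(agent, input=text, max_turns=8)`.

```python
import asyncio


async def run_with_retries(make_attempt, *, attempts: int = 3, timeout_s: float = 30.0,
                           backoff_s: float = 1.0):
    """Call make_attempt() up to `attempts` times, each bounded by a timeout.

    make_attempt is a zero-argument callable returning a new coroutine per call.
    Nothing is persisted between attempts or across process restarts.
    """
    last_error = None
    for attempt in range(1, attempts + 1):
        try:
            return await asyncio.wait_for(make_attempt(), timeout=timeout_s)
        except (asyncio.TimeoutError, ConnectionError) as exc:
            last_error = exc
            if attempt < attempts:
                # Exponential backoff: backoff_s, 2 * backoff_s, 4 * backoff_s, ...
                await asyncio.sleep(backoff_s * 2 ** (attempt - 1))
    raise RuntimeError(f"all {attempts} attempts failed") from last_error


if __name__ == "__main__":
    calls = {"n": 0}

    async def flaky_run():
        # Simulates a run that fails twice with a transient error, then succeeds.
        calls["n"] += 1
        if calls["n"] < 3:
            raise ConnectionError("transient network error")
        return "final output"

    print(asyncio.run(run_with_retries(flaky_run, backoff_s=0.01)))
```

One caveat: retrying a whole run repeats any tool calls it already made, so keep tools idempotent or retry at a finer grain. That per-step bookkeeping is what a durable execution engine like Temporal takes off your hands.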

Treat guardrails as infrastructure, not features. Input guardrails whose tripwires halt execution on bad input are your circuit breakers. Output guardrails that check final responses are your quality gates. Deploy both.

Here’s what I’ve learned building autonomous SEO pipeline systems at Botonomy: 90% of the logic should be deterministic code — data fetching, validation, transformation, routing. The LLM handles the remaining 10%: language generation, intent classification, content analysis. The Agents SDK enables this exact pattern. Agents orchestrate, but code decides.

Building Marketing Agents: A Practical Use Case

Here’s how a three-agent marketing pipeline built on the OpenAI Agents SDK works today, not hypothetically.

Agent 1: SEO Auditor. Takes a URL, calls function tools that fetch page metadata, check heading structure, analyze keyword density, and pull Core Web Vitals data. Produces a structured audit report.
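Two of the auditor’s deterministic checks can be sketched with the standard library alone. The helpers below (`audit_headings`, `keyword_density`) are hypothetical; in an SDK pipeline you would wrap functions like these with `@function_tool`. Fetching the page and pulling Core Web Vitals are left out, script and style text is not filtered, and the keyword check handles single-word keywords only.

```python
import re
from html.parser import HTMLParser

_HEADING_TAGS = {f"h{i}": i for i in range(1, 7)}


class _PageParser(HTMLParser):
    """Collects h1-h6 headings as [level, text] plus every text node on the page."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self.text_parts = []
        self._open_level = None  # level of the heading currently open, if any

    def handle_starttag(self, tag, attrs):
        if tag in _HEADING_TAGS:
            self._open_level = _HEADING_TAGS[tag]
            self.headings.append([self._open_level, ""])

    def handle_endtag(self, tag):
        if _HEADING_TAGS.get(tag) == self._open_level:
            self._open_level = None

    def handle_data(self, data):
        self.text_parts.append(data)
        if self._open_level is not None:
            self.headings[-1][1] += data.strip()


def _parse(html: str) -> _PageParser:
    parser = _PageParser()
    parser.feed(html)
    parser.close()  # flush any buffered trailing text
    return parser


def audit_headings(html: str) -> dict:
    """Report the h1 count and skipped levels (e.g. an h1 followed directly by an h3)."""
    headings = _parse(html).headings
    levels = [level for level, _ in headings]
    skipped = [(prev, cur) for prev, cur in zip(levels, levels[1:]) if cur > prev + 1]
    return {"h1_count": levels.count(1), "skipped_levels": skipped, "headings": headings}


def keyword_density(html: str, keyword: str) -> float:
    """Percentage of words on the page equal to a single-word keyword."""
    text = " ".join(_parse(html).text_parts).lower()
    words = re.findall(r"[a-z0-9']+", text)
    if not words:
        return 0.0
    return 100.0 * words.count(keyword.lower()) / len(words)


if __name__ == "__main__":
    page = "<h1>Agents SDK guide</h1><p>The agents SDK runs agents.</p><h3>Tools</h3>"
    print(audit_headings(page))
    print(round(keyword_density(page, "agents"), 1))
```

Because these checks are ordinary functions, they are cheap to unit-test, and the agent only has to decide when to call them and how to summarize their output.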

Agent 2: Content Strategist. Receives the audit report via handoff. Uses its instructions to identify content gaps, recommend target keywords, and generate a brief. No web browsing — it works from the audit data and its training knowledge.

Agent 3: Content Writer. Receives the brief via second handoff. Generates draft content following the strategic recommendations. Guardrails validate output length, keyword inclusion, and brand tone adherence before returning results.
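The writer’s length and keyword checks are plain deterministic code, which makes them good guardrail logic. A minimal sketch, assuming word limits and a keyword list supplied by the strategist’s brief (hypothetical names throughout): an output guardrail could call `check_draft` and set its tripwire from the result. Brand-tone adherence is not covered, since that usually needs a model-based classifier rather than string checks.

```python
from dataclasses import dataclass, field


@dataclass
class DraftCheck:
    word_count: int
    missing_keywords: list = field(default_factory=list)
    problems: list = field(default_factory=list)

    @property
    def tripwire_triggered(self) -> bool:
        # Any problem should stop the draft from being returned.
        return bool(self.problems)


def check_draft(draft: str, *, min_words: int, max_words: int, keywords: list) -> DraftCheck:
    """Deterministic length and keyword checks for a generated draft."""
    word_count = len(draft.split())
    lowered = draft.lower()
    # Naive case-insensitive substring match; "SDK" would also match "SDKs".
    missing = [kw for kw in keywords if kw.lower() not in lowered]
    problems = []
    if word_count < min_words:
        problems.append(f"too short: {word_count} < {min_words} words")
    if word_count > max_words:
        problems.append(f"too long: {word_count} > {max_words} words")
    if missing:
        problems.append("missing keywords: " + ", ".join(missing))
    return DraftCheck(word_count, missing, problems)


if __name__ == "__main__":
    result = check_draft(
        "The OpenAI Agents SDK makes handoffs simple.",
        min_words=3,
        max_words=20,
        keywords=["Agents SDK", "guardrails"],
    )
    print(result.tripwire_triggered, result.problems)
```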

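Each agent has narrow, bounded responsibilities. The handoff chain is fixed in code: each agent lists only the next agent as a handoff target, so the model decides when to hand off but not where. The LLM handles what LLMs are good at (language), while the pipeline structure handles workflow logic.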

This is exactly how Botonomy approaches AI content marketing: deterministic agent orchestration for content, CRM enrichment, and outbound sequencing. Real pipelines, real outputs, real ROI tracking.

What’s Next for the OpenAI Agents SDK

The SDK’s GitHub repo shows consistent weekly commits and an active issue tracker — this isn’t a launch-and-forget project. OpenAI is iterating fast.

The TypeScript/JavaScript version (openai-agents-js) opens the SDK to full-stack teams building agent interfaces in Next.js, Node, or Deno environments. Same primitives, different runtime.

MCP (Model Context Protocol) integration is expanding tool interoperability. As more tool providers publish MCP-compatible servers, the SDK’s tool ecosystem grows without OpenAI writing custom integrations.

The bigger signal: OpenAI is betting on agents as the primary interface for AI applications. The SDK isn’t a side project — it’s infrastructure. Follow updates on the Botonomy blog as we continue building on and writing about these systems.

Conclusion

The OpenAI Agents SDK gives you the thinnest viable abstraction for building multi-agent systems — and that’s its greatest strength.

- Start with the four primitives: Agents, Handoffs, Guardrails, and Tracing cover most agent orchestration needs.

- Keep LLM usage narrow: let deterministic code handle routing, validation, and data — agents handle language.

- Deploy guardrails as infrastructure: tripwire guardrails and `max_turns` limits prevent production failures before they start.

If you’re building agent-powered marketing systems — or want them built without adding headcount — talk to us. Botonomy runs autonomous SEO, content, and outbound pipelines on deterministic agent architectures. No prompts-and-prayers. Botonomy.ai